The AWS Key That Cost 148 Million Dollars

In 2016, attackers got into a private GitHub repository used by Uber engineers and found AWS credentials committed in the code. They used those credentials to access Uber's AWS environment, discovered a large dataset of driver and rider personal information, downloaded it, and extorted Uber. Uber paid the attackers $100,000 through its bug bounty program, attempted to conceal the breach from regulators, and ultimately paid $148 million in settlements when the concealment was uncovered. The CSO was later convicted of obstruction of justice.

The root cause was a secret in a code repository, the same root cause that appears in the post-mortems for dozens of other breaches. It is one of the most well-documented, consistently exploited, and persistently unresolved vulnerabilities in software development. You can read about it in breach disclosures from 2012. The pattern hasn't changed. The frequency hasn't meaningfully decreased.

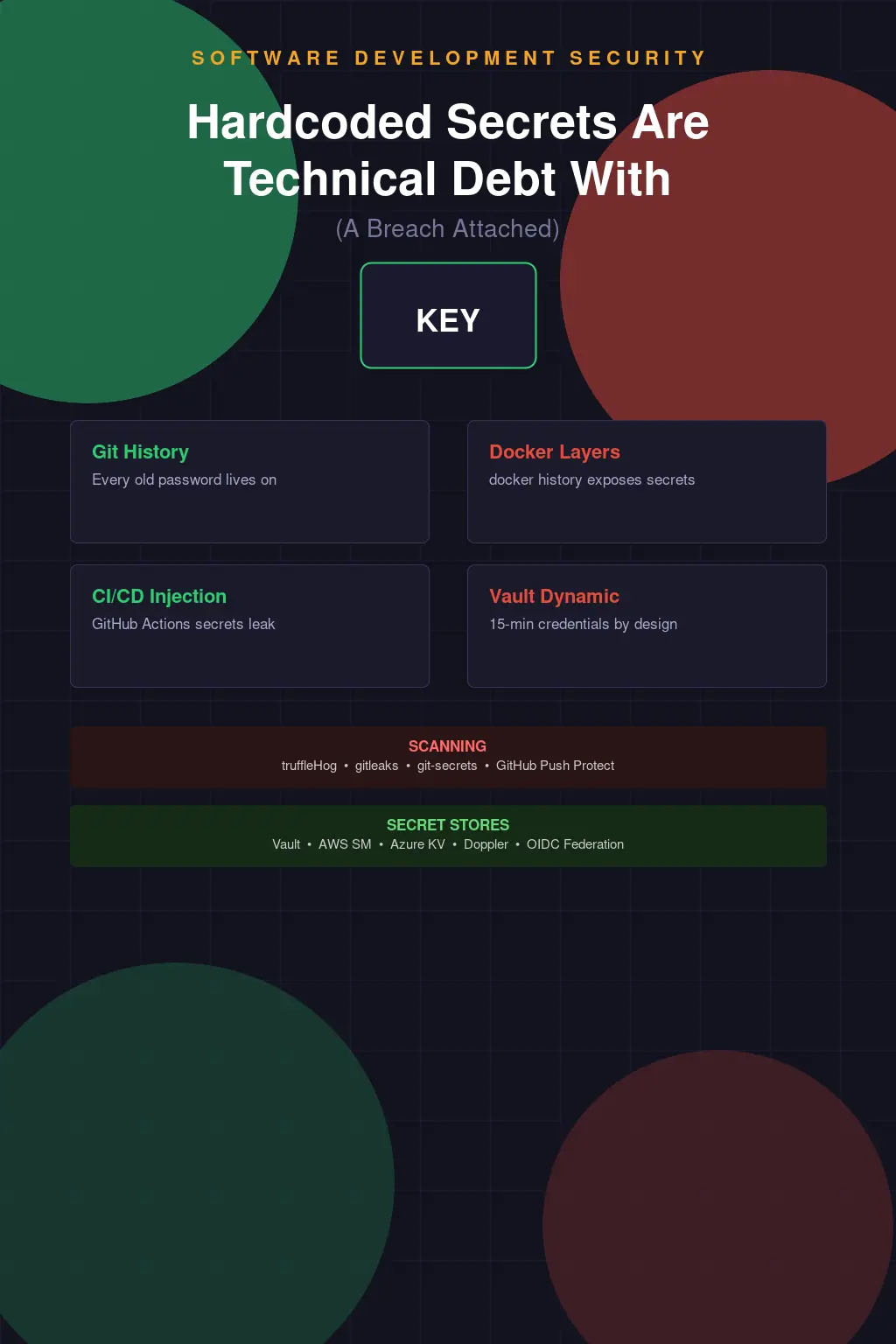

Hardcoded secrets — API keys, database passwords, service account credentials, encryption keys, OAuth tokens embedded directly in source code, configuration files, Docker images, or CI/CD pipelines — are technical debt with a specific breach vector attached. They're not theoretical vulnerabilities that require sophisticated exploitation. They're credentials that an attacker who finds the repository can use immediately, often with significant privilege, often in production environments.

The developer who hardcoded the credential wasn't malicious. They were trying to make something work. The secret went in during local testing and never came out because removing it required refactoring that would take time nobody had. "We'll rotate it later" is the phrase that precedes more breaches than any other in software security.

Git History Never Forgets

The most dangerous property of a secret committed to a git repository is not that it's currently in the codebase. It's that git history is permanent. Removing a secret from the current HEAD of a repository — deleting the file, editing it out, replacing it with a placeholder — does not remove it from the repository's commit history. Anyone who clones the repository and runs git log -p or git show [commit-hash] can retrieve the original credential.

This catches a lot of developers who think they've fixed the problem. They discover a hardcoded API key, they delete it or replace it, they push the fix. The credential is still exposed in every clone of the repository ever made, in the full commit history accessible to everyone with read access, and potentially in third-party systems that mirror or cache the repository.
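To make that concrete, here is a minimal Python sketch that looks for AWS-style access key IDs in the text produced by git log -p. The function name, the sample log, and the commit hashes are all made up for illustration, and the key is AWS's published documentation example, not a real credential. It is nowhere near a full scanner; it only shows that a "fixed" key is still sitting in the commit that introduced it.

```python
import re

# AWS access key IDs commonly look like "AKIA" plus 16 uppercase letters or digits.
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def secrets_in_history(log_patch_text: str) -> list[tuple[str, str]]:
    """Return (commit, key) pairs for keys ADDED anywhere in `git log -p` output."""
    findings = []
    current_commit = "unknown"
    for line in log_patch_text.splitlines():
        if line.startswith("commit "):
            current_commit = line.split()[1]
        # Added lines start with "+"; "+++" is the file header, not content.
        elif line.startswith("+") and not line.startswith("+++"):
            for match in AWS_KEY_ID.finditer(line):
                findings.append((current_commit, match.group(0)))
    return findings


# Hypothetical `git log -p` output: the newest commit deletes the key,
# but the older commit that introduced it is still part of the history.
SAMPLE_LOG = """\
commit bbb2222
    Remove hardcoded key

--- a/settings.py
+++ b/settings.py
-AWS_KEY = "AKIAIOSFODNN7EXAMPLE"
+AWS_KEY = os.environ["AWS_KEY"]
commit aaa1111
    Initial settings

--- /dev/null
+++ b/settings.py
+AWS_KEY = "AKIAIOSFODNN7EXAMPLE"
"""

print(secrets_in_history(SAMPLE_LOG))
# -> [('aaa1111', 'AKIAIOSFODNN7EXAMPLE')]
```

In a real repository you would feed the function the captured output of git log -p --all instead of the sample string.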

Actually removing a secret from git history requires rewriting history using git filter-branch, the BFG Repo-Cleaner, or git filter-repo (the current recommended tool). History rewriting changes every commit hash downstream of the edit, which means all collaborators need to re-clone, all open pull requests are invalidated, and CI/CD integrations may need re-configuration. It is disruptive and must be coordinated across everyone who has a clone.

And even after a successful history rewrite and force push, you have to assume that the credential was already discovered and used. Cached versions of the repository may exist on developer machines, build servers, backup systems, or code mirrors that were never updated. The credential must be treated as compromised from the moment it was committed, regardless of subsequent remediation in the repository itself. Rotation is not optional — it's the first action, not the last.

Docker Layers: The Secrets Cache Nobody Checks

Container images expose secrets through a mechanism that surprises even experienced developers. Docker images are built in layers, and each layer is a diff of the filesystem from the previous state. If a Dockerfile copies in a config file containing a secret, uses the secret during a build step, and then deletes the config file in a later step, the secret is still present in the layer created by the copy.

Anyone who pulls the image can review how it was built with docker history --no-trunc [image], extract the image tarball with docker save, and walk the layers to retrieve files that were "deleted" in later build steps. Credentials removed by a later Dockerfile instruction are not removed from the image. They remain in an earlier layer that is hidden from the running container's filesystem but easy to recover. (A file created and deleted within the same RUN instruction never lands in a layer, which is exactly why the copy-then-delete pattern across separate instructions is the dangerous one.)
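The layer behavior can be sketched in a few lines of Python. This is a simplified model, not a parser for real docker save output (whose on-disk layout varies between Docker versions): each layer is represented as an in-memory tar archive, and the deletion is represented by a whiteout entry (a file named .wh.<name>), which is how image layers record removals. The file names and the password are hypothetical.

```python
import io
import tarfile


def make_layer(files: dict[str, bytes]) -> bytes:
    """Build an in-memory tar archive standing in for one image layer."""
    buf = io.BytesIO()
    with tarfile.open(fileobj=buf, mode="w") as tar:
        for name, data in files.items():
            info = tarfile.TarInfo(name=name)
            info.size = len(data)
            tar.addfile(info, io.BytesIO(data))
    return buf.getvalue()


def layers_containing(layers: list[bytes], path: str) -> list[int]:
    """Return the index of every layer whose archive still holds `path`."""
    hits = []
    for index, raw in enumerate(layers):
        with tarfile.open(fileobj=io.BytesIO(raw), mode="r") as tar:
            if path in tar.getnames():
                hits.append(index)
    return hits


def read_from_layer(raw_layer: bytes, path: str) -> bytes:
    """Read a file's contents straight out of a single layer archive."""
    with tarfile.open(fileobj=io.BytesIO(raw_layer), mode="r") as tar:
        return tar.extractfile(path).read()


LAYERS = [
    make_layer({"app/main.py": b"print('hi')\n"}),            # base files
    make_layer({"app/config.env": b"DB_PASSWORD=hunter2\n"}),  # COPY config.env
    make_layer({"app/.wh.config.env": b""}),                   # RUN rm config.env
]

hits = layers_containing(LAYERS, "app/config.env")
print(hits)                                               # -> [1]
print(read_from_layer(LAYERS[hits[0]], "app/config.env"))  # -> b'DB_PASSWORD=hunter2\n'
```

The final layer only adds a marker saying the file is gone. The bytes of the secret are untouched in layer 1, and the image ships every layer.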

The correct pattern is multi-stage builds: use one build stage for compilation and credential consumption, copy only the final artifacts (not the build context or intermediate files) to a clean final stage. Secrets needed only during the build process should be provided as Docker BuildKit secrets (--secret flag), which mount the secret into the build container at a specified path without persisting it in any layer. BuildKit secrets are the right architecture for build-time secrets; they've been available since Docker 18.09 and are still not universally adopted.

Container registries amplify the exposure. An image pushed to a public registry such as Docker Hub or GitHub Container Registry with an embedded secret is immediately accessible to the entire internet. Attackers and security researchers scan public registries continuously with automated tooling, and open-source scanners such as Trivy, along with commercial products, can detect secrets embedded in image layers. The window between push and discovery can be minutes.

Finding Secrets Before Attackers Do

TruffleHog is the open-source tool most commonly used for secrets detection in git repositories. It scans both the current working tree and a repository's full commit history, combining regex patterns for known credential formats with entropy analysis. The entropy analysis flags high-entropy strings that look like randomized secrets, and the pattern side includes specific detectors for over 700 credential types: AWS access keys, GitHub tokens, Stripe keys, Twilio credentials, and many others.
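The two detection ideas, a regex for a known credential format and an entropy check for random-looking strings, can be illustrated with a short Python sketch. This is not TruffleHog's implementation; the function names, the token regex, and the 4.0 bits-per-character threshold are assumptions chosen for the example, and real tools tune all of these to manage false positives.

```python
import math
import re
from collections import Counter

# Known-format detector: AWS access key IDs ("AKIA" + 16 uppercase letters/digits).
AWS_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")
# Generic candidate tokens: long runs of base64/hex-like characters.
TOKEN = re.compile(r"[A-Za-z0-9+/_-]{20,}")


def shannon_entropy(text: str) -> float:
    """Shannon entropy of `text` in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in Counter(text).values())


def find_candidates(line: str, threshold: float = 4.0) -> list[tuple[str, str]]:
    """Return (detector_name, matched_text) pairs for one line of text."""
    findings = [("aws-access-key-id", m.group(0)) for m in AWS_KEY_ID.finditer(line)]
    for m in TOKEN.finditer(line):
        token = m.group(0)
        # Skip tokens the format detector already reported.
        if shannon_entropy(token) >= threshold and not AWS_KEY_ID.fullmatch(token):
            findings.append(("high-entropy", token))
    return findings


print(shannon_entropy("aaaa"))  # a single repeated character carries no information
print(shannon_entropy("abcd"))  # four equally likely characters: 2.0 bits each
print(find_candidates('AWS_KEY = "AKIAIOSFODNN7EXAMPLE"'))
```

The format detector is precise but only knows the formats it was written for. The entropy check is the opposite: it catches unknown secret types but also flags hashes, UUIDs, and minified assets, which is why scanners combine the two.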

Running TruffleHog against your repositories is not a one-time exercise. It's a baseline scan to understand your current exposure, followed by integration into your development pipeline to prevent future introduction. The pipeline integration is the more important investment: catching secrets before they reach the repository rather than detecting them after the fact.

Pre-commit hooks are the first line of prevention. detect-secrets (from Yelp) and gitleaks are popular choices for pre-commit integration. They run on the developer's machine before the commit is created, blocking commits that contain detected secrets. That is also their limitation: because they run on the developer's machine, they can't be enforced through policy, and a developer can bypass them with git commit --no-verify. They're best understood as developer education and friction, not a reliable enforcement mechanism.

Server-side enforcement is more reliable. GitHub Advanced Security includes Secret Scanning with push protection, which can block pushes containing detected credentials before they reach the repository. GitLab has equivalent functionality. These controls require that your repositories are hosted on a platform that supports them and that push protection is enabled — it's not on by default everywhere and requires explicit configuration.

CI/CD pipeline scanning closes the gap between pre-commit and production. Running TruffleHog or gitleaks as a required pipeline step means even secrets that bypass pre-commit hooks are caught before the code reaches a deployment pipeline. The pipeline step can be configured to fail the build, producing a forcing function that doesn't rely on developer machine configuration.

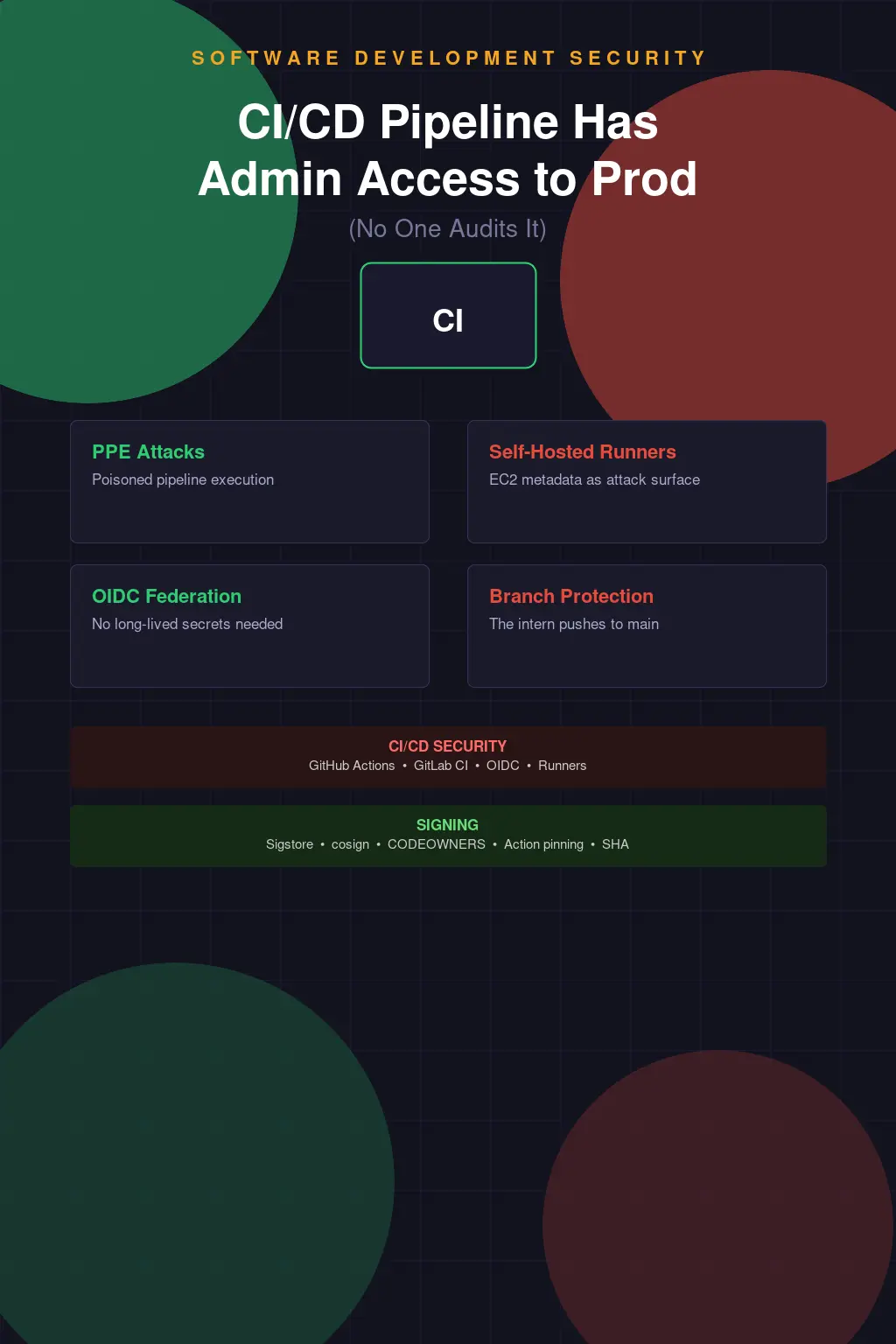

Secrets in CI/CD: The Privileged Identity Nobody Governs

CI/CD pipelines accumulate secrets at a remarkable rate. Every service integration needs credentials. Every deployment target needs access keys. Every notification webhook needs a token. In a mature development organization, a CI/CD system like Jenkins, GitHub Actions, GitLab CI, or CircleCI may hold hundreds of stored secrets — API keys for cloud providers, database credentials for staging and production environments, container registry tokens, notification service credentials, signing keys for release artifacts.

These secrets are often:

Highly privileged. A CI/CD pipeline that deploys to production needs credentials with production deployment authority. Those credentials can often do far more than deploy — they can read, modify, or delete infrastructure. The principle of least privilege is systematically violated because it's easier to provision broad credentials that work than narrow credentials that need iteration.

Long-lived. API keys and service account credentials stored in CI/CD platforms are often never rotated. They were created when the pipeline was set up and have been running continuously for years. Rotation requires updating the stored secret and testing that the pipeline still works — low-priority maintenance work that accumulates indefinitely.

Broadly accessible. GitHub Actions secrets are scoped to the organization, repository, or environment, with varying levels of access control, and anyone who can modify a workflow within that scope can make the workflow use, and leak, the secret. In many organizations, secrets are stored at the organization level and accessible to any workflow in any repository, including repositories owned by contractors or third-party developers who have been granted access for limited purposes.

The right architecture uses short-lived credentials generated at runtime rather than long-lived static secrets. GitHub Actions' OIDC integration with AWS, GCP, and Azure allows a workflow to exchange a signed identity token for temporary credentials when the job runs, without any stored long-term credentials. This eliminates the rotation problem, removes the stored secret that could otherwise be exfiltrated, and provides better audit logging because each credential issuance is a distinct authentication event. If your pipelines are still using IAM user access keys stored as GitHub Actions secrets, switching to OIDC is one of the highest-ROI security improvements available.
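What makes OIDC work is that the cloud provider decides whether to issue credentials based on claims in the workflow's identity token rather than on a stored secret. The Python sketch below imitates that trust decision. It is an illustration only: it does not verify the token signature, it is not how any cloud provider implements the check, and the organization, repository, and function names are hypothetical. The issuer URL and the repo:OWNER/REPO:ref:... subject format are the ones GitHub documents for Actions tokens.

```python
from fnmatch import fnmatchcase

GITHUB_ISSUER = "https://token.actions.githubusercontent.com"


def may_assume_role(claims: dict, allowed_audience: str, allowed_subject: str) -> bool:
    """Toy version of a role trust policy: exact issuer and audience, pattern on subject."""
    return (
        claims.get("iss") == GITHUB_ISSUER
        and claims.get("aud") == allowed_audience
        and fnmatchcase(claims.get("sub", ""), allowed_subject)
    )


# Hypothetical decoded token claims for two workflow runs.
deploy_job = {
    "iss": GITHUB_ISSUER,
    "aud": "sts.amazonaws.com",
    "sub": "repo:example-org/payments:ref:refs/heads/main",
}
contractor_job = {
    "iss": GITHUB_ISSUER,
    "aud": "sts.amazonaws.com",
    "sub": "repo:example-org/contractor-sandbox:ref:refs/heads/main",
}

POLICY_SUBJECT = "repo:example-org/payments:ref:refs/heads/main"

print(may_assume_role(deploy_job, "sts.amazonaws.com", POLICY_SUBJECT))      # True
print(may_assume_role(contractor_job, "sts.amazonaws.com", POLICY_SUBJECT))  # False
```

Pinning the subject to one repository and branch is the design choice that matters here. A wildcard such as repo:example-org/* would recreate the organization-wide access problem described above.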

Vault: The Right Architecture and Why It's Underdeployed

HashiCorp Vault is the de facto standard for secrets management in engineering organizations that have moved past static credential storage. Vault provides: dynamic secret generation (credentials that are created on demand and expire automatically), secrets engine integrations for AWS, GCP, Azure, databases, PKI, SSH, and many others, fine-grained access policies based on identity, audit logging for every secret access event, and a rich API that integrates with most deployment tooling.

The dynamic credentials capability is the most operationally significant feature. Instead of storing a static AWS access key that's valid indefinitely, an application authenticates to Vault using its own identity, requests AWS credentials, gets credentials valid for a defined TTL (minutes, hours), uses them for the task, and they expire automatically. If the credentials are stolen from memory or logs, they're worthless after the TTL expires. This changes the economics of secret compromise significantly.
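The lease-and-expire behavior can be modeled with a toy broker in Python. To be clear, this is not Vault's API or client library; the class names and the 15-minute TTL are invented for the example, and the clock is injectable only so the expiry can be demonstrated without waiting.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Lease:
    access_key: str
    expires_at: float


class ToyCredentialBroker:
    """Issues random credentials that stop validating once their TTL has passed."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._leases: dict[str, Lease] = {}

    def issue(self) -> Lease:
        lease = Lease(
            access_key=secrets.token_hex(16),
            expires_at=self._clock() + self._ttl,
        )
        self._leases[lease.access_key] = lease
        return lease

    def is_valid(self, access_key: str) -> bool:
        lease = self._leases.get(access_key)
        return lease is not None and self._clock() < lease.expires_at


now = [0.0]  # fake clock so the example runs instantly
broker = ToyCredentialBroker(ttl_seconds=900, clock=lambda: now[0])

lease = broker.issue()
print(broker.is_valid(lease.access_key))  # True: inside the 15-minute TTL

now[0] += 901  # an attacker replays the stolen key after the TTL
print(broker.is_valid(lease.access_key))  # False: the credential is already dead
```

Nobody had to notice the theft or run a rotation for the stolen key to stop working. The expiry is built into issuance.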

AWS Secrets Manager and GCP Secret Manager cover much of the same ground within their respective cloud environments (centralized storage, access control, audit logging, and rotation support), with native IAM integration that makes them simpler to deploy for cloud-native workloads. Azure Key Vault covers the same need on Azure. These cloud-native options are often the right starting point for organizations that are primarily on a single cloud provider.

The reason secrets management tooling is underdeployed despite being widely understood: migration cost. Moving an existing application from hardcoded secrets or environment variable secrets to Vault or Secrets Manager requires code changes, testing, and operational runbook updates. It's not glamorous work, it doesn't produce visible features, and it competes for engineering time with feature development. This is the technical debt framing: the migration is deferred because nothing has broken yet, and it stays deferred until something does.

Environment Variables: Better Than Hardcoding, Still Not Great

The standard advice for "don't hardcode secrets" is "use environment variables instead." This is correct in the narrow sense that environment variables separate configuration from code and allow credentials to differ between environments. But environment variables have their own exposure paths that deserve acknowledgment.

A process's environment is accessible to any process running as the same user, to anyone with the ability to read /proc/[pid]/environ on Linux, and to crash dumps that capture process memory. Environment variables in cloud function platforms (Lambda, Cloud Run, Cloud Functions) are stored in service configuration and are readable by anyone with IAM permission to describe the function. In containerized environments, environment variables are visible in docker inspect output and are accessible through the container runtime API to anyone with Docker daemon access.
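The /proc exposure is easy to underestimate because the file format is so simple: NAME=value entries separated by NUL bytes. A minimal Python sketch follows, with hypothetical function names and sample values. The second function only works on Linux and only for processes the caller has permission to inspect.

```python
def parse_environ(raw: bytes) -> dict[str, str]:
    """Parse the NUL-separated NAME=value format used by /proc/<pid>/environ."""
    env = {}
    for entry in raw.split(b"\0"):
        if b"=" in entry:
            name, _, value = entry.partition(b"=")
            env[name.decode(errors="replace")] = value.decode(errors="replace")
    return env


def read_process_environ(pid="self") -> dict[str, str]:
    """Linux only: read another process's environment, subject to file permissions."""
    with open(f"/proc/{pid}/environ", "rb") as handle:
        return parse_environ(handle.read())


# Sample bytes in the same shape as a real /proc/<pid>/environ file.
sample = b"PATH=/usr/bin\0DB_PASSWORD=hunter2\0"
print(parse_environ(sample))
# -> {'PATH': '/usr/bin', 'DB_PASSWORD': 'hunter2'}
```

No exploit is involved. Any tool running as the same user can do this with one file read.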

More practically: environment variables frequently end up in logs. Debug logging that captures the process environment for diagnostics, application startup logging that dumps configuration, error handling that serializes context — all of these can expose environment variable values in places where they're not expected.
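One common mitigation is to redact by variable name before anything reaches a log. The Python sketch below shows the idea; the name patterns and function name are assumptions for illustration, and a name-based filter is only a partial defense, since it will miss secrets stored under innocuous names (a DATABASE_URL with an embedded password, for example).

```python
import re

# Variable names that usually indicate a secret. Deliberately broad; tune for your stack.
SENSITIVE_NAME = re.compile(
    r"(SECRET|TOKEN|PASSWORD|PASSWD|API_?KEY|PRIVATE_?KEY|CREDENTIAL)",
    re.IGNORECASE,
)


def redact_env(env: dict[str, str]) -> dict[str, str]:
    """Return a copy of `env` that is safer to write to logs."""
    return {
        name: "[REDACTED]" if SENSITIVE_NAME.search(name) else value
        for name, value in env.items()
    }


startup_config = {
    "PATH": "/usr/bin",
    "DB_PASSWORD": "hunter2",
    "STRIPE_API_KEY": "sk_test_example",
    "LOG_LEVEL": "debug",
}
print(redact_env(startup_config))
```

In practice you would pass redact_env(dict(os.environ)) to the startup logger instead of the raw environment.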

Environment variables are significantly better than hardcoded secrets. They're not an end state for secrets management in systems where the credentials have significant privilege. The end state is dynamic credential generation with short TTLs, audit logging, and least-privilege access — which requires Vault-class tooling or cloud-native secrets manager integration.

The Rotation Myth and the Breach You'll Have Anyway

Credential rotation is necessary but not sufficient. The security community sometimes frames rotation as the solution to the hardcoded secrets problem: if you rotated your credentials regularly, a compromised credential would have a short window of validity. This is technically true and operationally a fantasy for most organizations.

Static credential rotation in applications with hardcoded secrets requires a deployment to push new credentials. The deployment schedule is measured in days or weeks. The rotation cycle is therefore measured in days or weeks at best, often months in practice, often never because the rotation breaks something and nobody has time to debug it right before a release.

Automated rotation through AWS Secrets Manager, Vault's dynamic credentials, or database secrets engines actually solves this problem. TTLs measured in hours or minutes mean that a compromised credential is stale before an attacker can operationalize it in most scenarios. This is the architecture to build toward. Manual rotation cadences on static credentials are not.

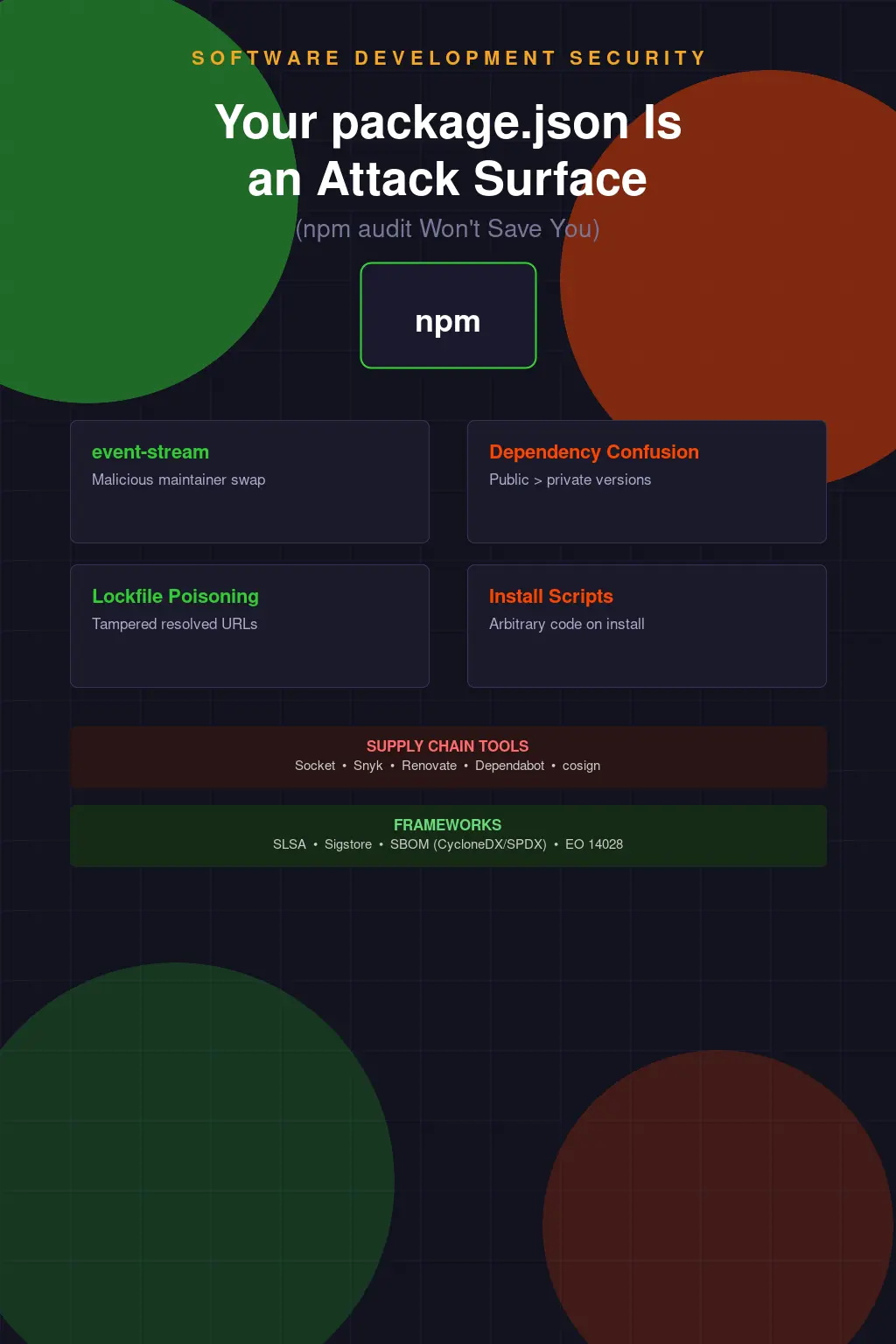

The honest summary: your codebase has hardcoded secrets somewhere. Your git history has secrets that were supposedly removed. Your Docker images have secrets in intermediate layers. Your CI/CD system has static long-lived credentials that haven't been rotated since the platform was set up. And there's a non-trivial probability that some of those secrets have already been discovered by automated scanners and are sitting in a credential database being sold on dark web markets right now.

TruffleHog. Gitleaks. Pre-commit hooks. OIDC for CI/CD. Vault or a cloud secrets manager for application secrets. These are not exotic technologies. They are table stakes that most organizations are still treating as future-state aspirations while the risk created by their absence compounds daily.