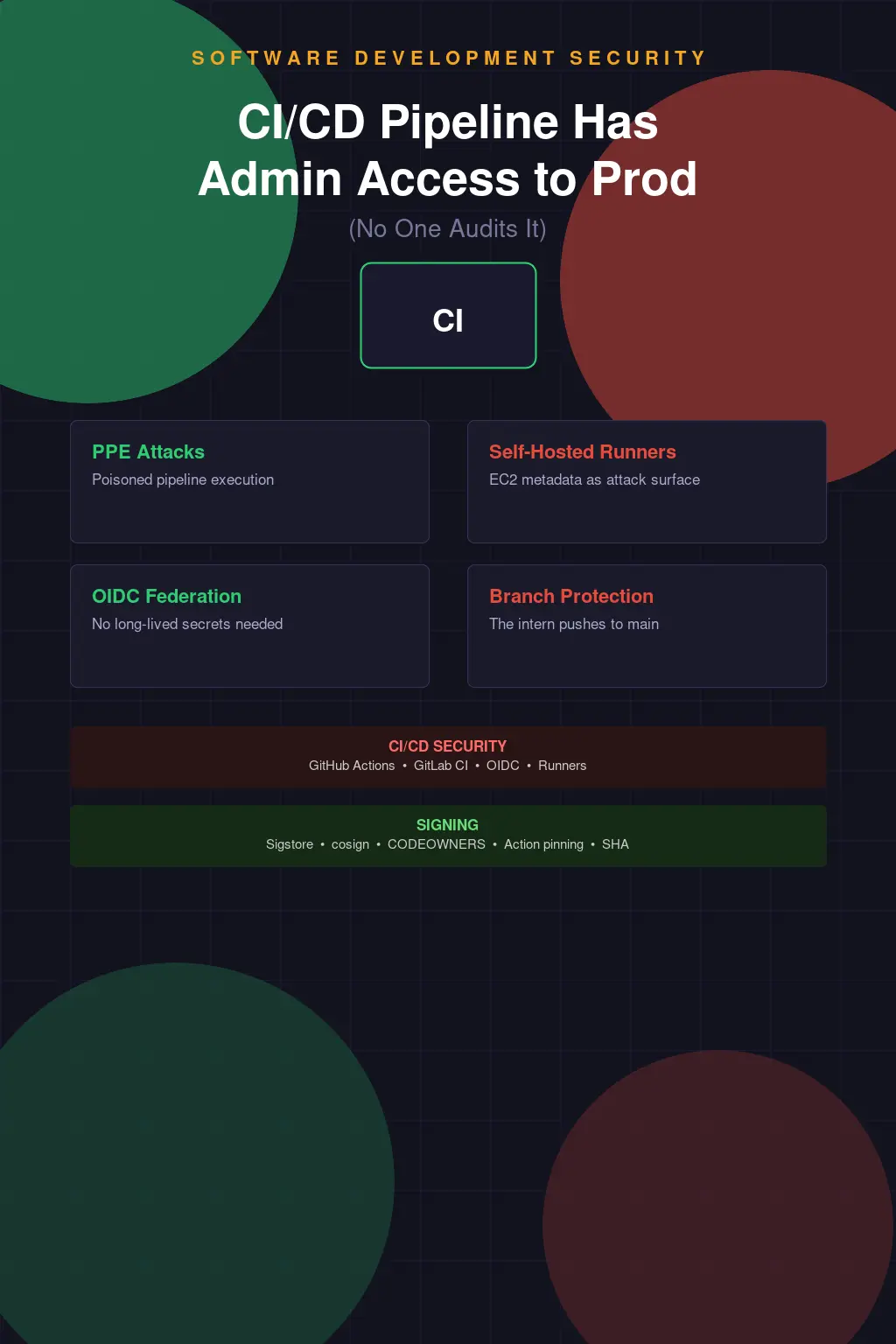

Your CI/CD Pipeline Has Admin Access to Production and No One Audits It

Here's a scenario that plays out in organizations of every size: a developer joins the team, gets contributor access to the repository, and within a week they've discovered that the GitHub Actions workflow they're now modifying has an AWS_ACCESS_KEY_ID and AWS_SECRET_ACCESS_KEY stored as repository secrets, and those credentials have AdministratorAccess to the production AWS account. Not ReadOnlyAccess. Not a scoped deployment role. AdministratorAccess. Because that's what finally worked when the engineer who set this up two years ago was trying to get the deployment unblocked, and nobody ever went back and tightened it.

The pipeline is trusted. That's the assumption baked into every CI/CD system: code that makes it through the pipeline is good code, and the pipeline itself is a safe execution environment. That assumption used to be reasonable when CI was a Jenkins box in the corner running builds that didn't touch production directly. It's not reasonable anymore. Modern CI/CD pipelines are privileged automation systems with direct access to production infrastructure, and they're treated with a fraction of the security scrutiny applied to actual production systems. They shouldn't be.

What a Compromised Pipeline Actually Gets You

Poisoned Pipeline Execution — the PPE attack class that researchers at Cider Security documented and named — is about one thing: an attacker who can influence the pipeline's execution environment can leverage whatever trust the pipeline has been granted. That might mean modifying a workflow YAML file in a branch they control. It might mean submitting a pull request that modifies build scripts. It might mean compromising a dependency that gets pulled in during the build. If the pipeline runs their code, they inherit the pipeline's access.

Self-hosted runners are the highest-risk variant of this. GitHub-hosted runners give you an ephemeral, isolated environment that's torn down after each job: an attacker who compromises code running on a hosted runner gets the job's secrets and whatever network access the runner has, but they can't persist to subsequent jobs through the runner environment itself. A self-hosted runner is a persistent machine. It might be an EC2 instance with an attached IAM instance profile. It might be a Kubernetes pod running with a service account. It might be a physical build server that someone set up three years ago and nobody has patched since. Any code that runs on it runs in that persistent context, with whatever credentials and network access that context has.

I've seen self-hosted runner environments where the runner process was running as root on an EC2 instance whose instance profile had broad IAM permissions. The logic was: the runner needs to be able to do deployments, so it needs permissions. Nobody had thought through what happens if a build job exfiltrates those credentials. Nobody had thought through the instance metadata endpoint either: running curl http://169.254.169.254/latest/meta-data/iam/security-credentials/ returns the name of the role attached to the instance profile, and appending that name to the path returns temporary credentials for that role. With AdministratorAccess attached, those credentials work immediately. That's not an advanced technique. It's in the AWS documentation.
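If you run self-hosted runners on EC2, it's worth checking what a build job can actually see. The following is a minimal Python sketch that does only what the curl example above does: a plain, unauthenticated GET against the credential listing path, returning the role names it finds or None. Two assumptions to flag. It deliberately bypasses any HTTP proxy, because the metadata address is link-local. And it only probes the IMDSv1-style request: an instance that requires IMDSv2 answers that GET with 401, which this reports as None, but code on the box can still request an IMDSv2 session token first, so None is not proof the credentials are out of reach.

```python
import http.client
import urllib.request
from typing import Optional

# The listing path from the curl example; it returns the instance profile's role name(s).
IMDS_CREDS_PATH = "http://169.254.169.254/latest/meta-data/iam/security-credentials/"


def exposed_instance_roles(url: str = IMDS_CREDS_PATH, timeout: float = 1.0) -> Optional[list[str]]:
    """Return role names readable via a plain GET to `url`, or None if the GET fails.

    Only the unauthenticated (IMDSv1-style) request is probed. A 401 from an
    IMDSv2-only instance also yields None, which is NOT proof the credentials are safe.
    """
    # The metadata address is link-local, so never send this through an HTTP proxy.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(url, timeout=timeout) as resp:
            body = resp.read().decode("utf-8")
    except (OSError, http.client.HTTPException):
        # URLError and HTTPError subclass OSError: refused, unreachable, timeout, 401/404.
        return None
    # One role name per line in the listing response.
    return [line.strip() for line in body.splitlines() if line.strip()]
```

Run it from a throwaway job on the runner you're worried about. A non-empty list means every job scheduled on that runner can fetch the same credentials.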

OIDC Federation and Why You Should Have Done This Already

OIDC-based federation between CI/CD platforms and cloud providers has been available in GitHub Actions since late 2021, in GitLab CI since around the same time, and the AWS, Azure, and GCP implementations are all mature and well-documented. The premise: instead of storing long-lived cloud credentials as CI secrets, you configure the cloud provider to trust identity tokens issued by GitHub (or GitLab), and the pipeline assumes a role by exchanging a short-lived OIDC token for temporary credentials. No secret to rotate. No secret to leak. No static credentials sitting in repository settings waiting to be discovered.
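To make the token exchange concrete, here's a minimal Python sketch of the two requests involved. In real workflows the aws-actions/configure-aws-credentials action does this for you; the point of the sketch is that no stored secret appears anywhere in the flow. It assumes the job was granted permissions: id-token: write, which is what makes GitHub set ACTIONS_ID_TOKEN_REQUEST_URL and ACTIONS_ID_TOKEN_REQUEST_TOKEN, and it uses STS's AssumeRoleWithWebIdentity query call, which needs no AWS signature because the OIDC token itself is the credential. The role ARN and session name are placeholders.

```python
import json
import os
import urllib.parse
import urllib.request

STS_ENDPOINT = "https://sts.amazonaws.com/"


def github_oidc_token_request(env: dict[str, str], audience: str = "sts.amazonaws.com") -> urllib.request.Request:
    """Build the request a job makes to GitHub for its short-lived OIDC token.

    The request URL GitHub provides already carries a query string, so the
    audience is appended with '&'.
    """
    url = env["ACTIONS_ID_TOKEN_REQUEST_URL"] + "&audience=" + urllib.parse.quote(audience, safe="")
    return urllib.request.Request(
        url, headers={"Authorization": "bearer " + env["ACTIONS_ID_TOKEN_REQUEST_TOKEN"]}
    )


def sts_exchange_url(role_arn: str, session_name: str, oidc_token: str, duration: int = 900) -> str:
    """Build an unsigned STS AssumeRoleWithWebIdentity call (900 s is the minimum duration)."""
    params = {
        "Action": "AssumeRoleWithWebIdentity",
        "Version": "2011-06-15",
        "RoleArn": role_arn,
        "RoleSessionName": session_name,
        "WebIdentityToken": oidc_token,
        "DurationSeconds": str(duration),
    }
    return STS_ENDPOINT + "?" + urllib.parse.urlencode(params)


if __name__ == "__main__" and "ACTIONS_ID_TOKEN_REQUEST_URL" in os.environ:
    with urllib.request.urlopen(github_oidc_token_request(dict(os.environ))) as resp:
        token = json.load(resp)["value"]  # the JWT issued for this job
    # Placeholder role ARN; the XML response holds temporary keys, so don't log the body.
    url = sts_exchange_url("arn:aws:iam::123456789012:role/example-deploy", "gha-deploy", token)
    with urllib.request.urlopen(url) as resp:
        print("STS status:", resp.status)
```

The STS response carries an access key, secret key, and session token that expire after DurationSeconds. The sketch prints only the HTTP status, since dumping the body would put live credentials in the job log.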

The conditions you can enforce on OIDC-issued credentials are granular. You can restrict them to specific repository names, specific branches, specific workflow filenames. An OIDC token issued for a PR from a fork can be blocked from assuming production roles. An OIDC token from the main branch deploy workflow can be allowed to assume a specific deployment role with exactly the permissions required for that deployment and nothing else. This is significantly better than the alternative, and it's not particularly complicated to implement. The AWS documentation for GitHub Actions OIDC is clear and has working examples. There's no good reason to still be using long-lived access keys for CI/CD cloud access in 2024.
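Here's what those conditions look like in an AWS role trust policy, generated from Python so they can be checked locally. The sketch assumes the GitHub OIDC provider is already registered in the account under its standard issuer host, token.actions.githubusercontent.com, and that the sub claim uses GitHub's default format: repo:ORG/REPO:ref:refs/heads/BRANCH for branch pushes, repo:ORG/REPO:pull_request for pull requests. The claims_satisfy helper is hypothetical, a rough stand-in for IAM's evaluator rather than a replacement for it.

```python
import fnmatch

GITHUB_OIDC = "token.actions.githubusercontent.com"


def deploy_trust_policy(account_id: str, repo: str, branch: str = "main") -> dict:
    """Trust policy letting only pushes to `branch` of `repo` assume the role via GitHub OIDC."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": f"arn:aws:iam::{account_id}:oidc-provider/{GITHUB_OIDC}"},
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        f"{GITHUB_OIDC}:aud": "sts.amazonaws.com",
                        f"{GITHUB_OIDC}:sub": f"repo:{repo}:ref:refs/heads/{branch}",
                    }
                },
            }
        ],
    }


def _conditions_hold(conditions: dict, claims: dict) -> bool:
    for operator, pairs in conditions.items():
        for key, expected in pairs.items():
            claim = claims.get(key.split(":", 1)[1])  # "<issuer>:sub" -> "sub"
            if claim is None:
                return False
            if operator == "StringEquals":
                ok = claim == expected
            elif operator == "StringLike":
                ok = fnmatch.fnmatchcase(claim, expected)  # approximates IAM wildcards
            else:
                raise ValueError(f"unsupported operator: {operator}")
            if not ok:
                return False
    return True


def claims_satisfy(policy: dict, claims: dict) -> bool:
    """Hypothetical local check: does any Allow statement's condition block accept these claims?

    Handles only single-valued StringEquals/StringLike and ignores Deny statements.
    """
    return any(
        stmt.get("Effect") == "Allow" and _conditions_hold(stmt.get("Condition", {}), claims)
        for stmt in policy["Statement"]
    )
```

A token from a pull request carries sub = repo:ORG/REPO:pull_request rather than the refs/heads/main form, so it fails the StringEquals check, which is exactly the blocking behavior described above. If you deploy through GitHub environments, the sub claim becomes repo:ORG/REPO:environment:NAME instead, and the condition needs to match that form.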

The resistance I encounter when pushing this is usually about perceived complexity or concern about workflow disruption. "We'd have to update all our workflows." Yes. You would. That's fine. This is the kind of migration that has a very high security payoff for a manageable engineering investment. Do it in phases — start with the workflows that have the most privileged access — but do it.

Branch Protection Isn't Just About Code Quality

Branch protection rules exist in most people's mental model as a code quality gate: require PR reviews, require status checks to pass, prevent force pushes. That's all true and those are all good reasons to enable them. But branch protection is also your primary mechanism for ensuring that the privileged deployment path — the workflow that runs on merge to main and does the actual production deployment — can't be triggered by arbitrary code.

When branch protection is misconfigured or absent, an attacker with repository write access can push directly to main and trigger a production deployment that runs their code with full pipeline privileges. Administrators are a further gap: on GitHub, for example, a classic branch protection rule doesn't bind repository administrators unless it's explicitly configured to include them. If your repository admin list is long (and it usually is, because "just make them an admin" is the path of least resistance when someone needs access), you have a lot of people with the ability to bypass the controls you think you have.
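Auditing this doesn't require clicking through settings pages. Below is a minimal Python sketch against GitHub's REST endpoint for classic branch protection, GET /repos/{owner}/{repo}/branches/{branch}/protection, which answers 404 when the branch isn't protected. The fields it reads (required_pull_request_reviews, enforce_admins, allow_force_pushes, required_status_checks) come from that endpoint's response; which gaps to report, and their wording, are illustrative choices. It doesn't see repository rulesets, GitHub's newer mechanism, so a branch governed only by a ruleset will show up here as unprotected.

```python
import json
import urllib.error
import urllib.request
from typing import Optional


def fetch_protection(owner: str, repo: str, branch: str, token: str) -> Optional[dict]:
    """Return the branch's classic protection settings, or None if GitHub reports none."""
    req = urllib.request.Request(
        f"https://api.github.com/repos/{owner}/{repo}/branches/{branch}/protection",
        headers={"Accept": "application/vnd.github+json", "Authorization": f"Bearer {token}"},
    )
    try:
        with urllib.request.urlopen(req) as resp:
            return json.load(resp)
    except urllib.error.HTTPError as err:
        if err.code == 404:  # "Branch not protected" (also returned if the token can't see it)
            return None
        raise


def protection_gaps(protection: Optional[dict]) -> list[str]:
    """List ways the deploy branch can change without the review you think you have."""
    if protection is None:
        return ["branch is unprotected: any writer can push and trigger the deploy workflow"]
    gaps = []
    reviews = protection.get("required_pull_request_reviews") or {}
    if reviews.get("required_approving_review_count", 0) < 1:
        gaps.append("no approving review required before merge")
    if not (protection.get("enforce_admins") or {}).get("enabled", False):
        gaps.append("administrators can bypass the rule")
    if (protection.get("allow_force_pushes") or {}).get("enabled", False):
        gaps.append("force pushes are allowed")
    if not protection.get("required_status_checks"):
        gaps.append("no required status checks")
    return gaps
```

Run protection_gaps(fetch_protection(...)) over every repository whose main branch triggers a deployment; the token needs permission to read repository administration settings.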

The intern who can push to main is not a hypothetical. I've been on teams where developer onboarding gave every new hire write access to the main application repository and nobody thought carefully about what that meant for pipeline execution. The assumption was that peer review and social pressure would prevent bad merges. That assumption might hold for accidental quality issues. It doesn't hold for an insider threat scenario or for an account that's been compromised through phishing. Your branch protection strategy is your pipeline access control strategy. Treat it accordingly.

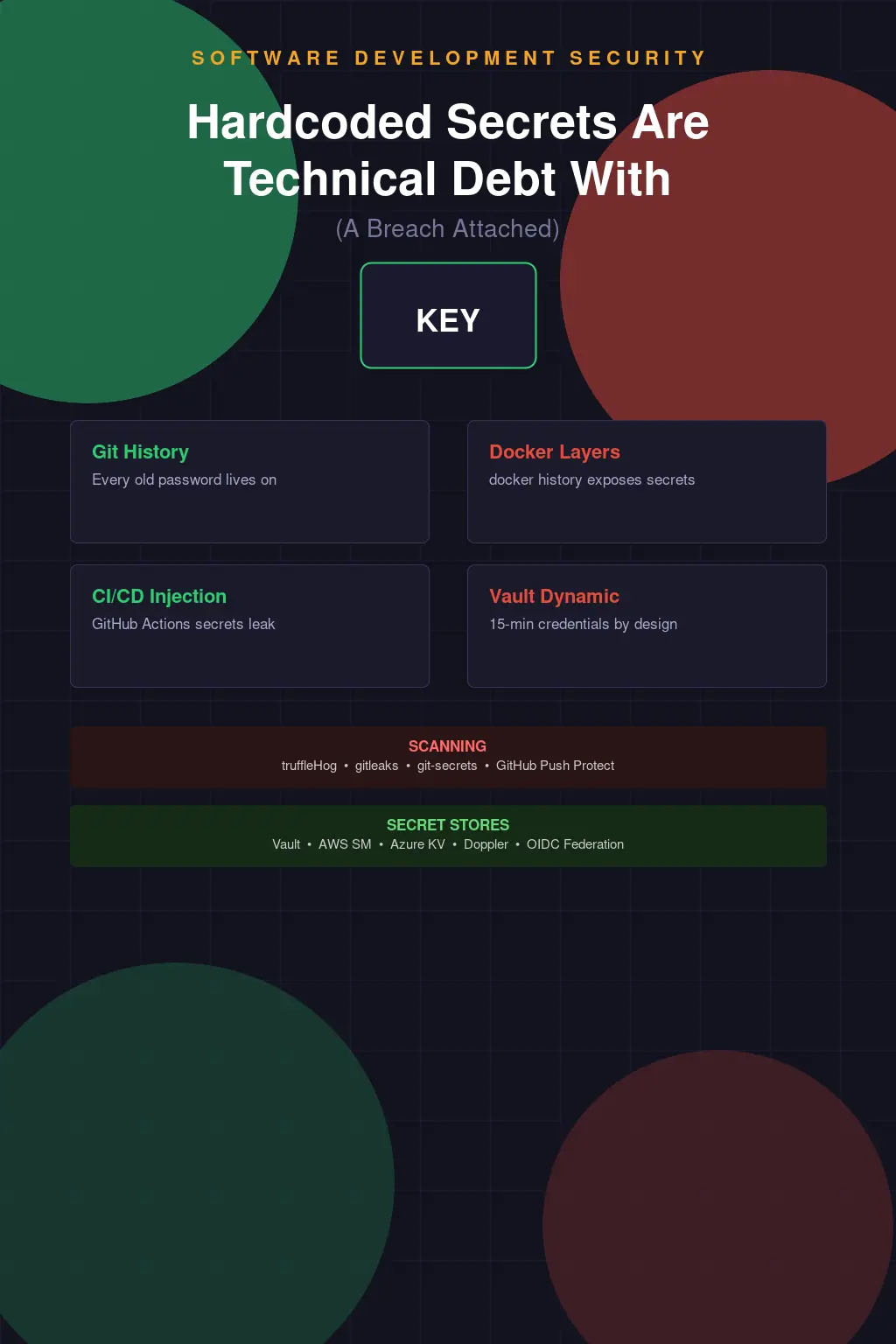

CI Secret Sprawl and the Inventory Problem

Most organizations have no comprehensive inventory of what secrets exist in their CI/CD systems, what access those secrets grant, or whether they're still in use. Repository-level secrets, organization-level secrets, environment-level secrets, secrets in Vault integrated via a workflow step, secrets fetched from SSM Parameter Store at runtime — they accumulate over years, get copied between repositories when someone bootstraps a new service, and are never cleaned up because "we might need it." The former employee whose AWS access key is still stored as a GitHub secret, just not actively used in any current workflow, is a real class of credential that shows up in security reviews.

GitHub's secret scanning feature flags known credential patterns that get committed to code, but it won't tell you about secrets that are legitimately stored as secrets yet have overly broad permissions or are no longer needed. That inventory exercise is largely manual, and organizations avoid it because it's tedious. But if you don't know what secrets you have and what they can access, you can't meaningfully assess your blast radius if one of them is compromised.
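The enumeration half of that inventory can at least be scripted. A minimal Python sketch, assuming you've already pulled secret metadata from GitHub's REST API (GET /repos/{owner}/{repo}/actions/secrets lists each secret's name, created_at, and updated_at, never its value) and have the repository's workflow files on disk. It flags secrets no workflow references and secrets not updated within a threshold; the 180-day default is arbitrary. Treat "unreferenced" as a lead, not a verdict: the sketch only recognizes the secrets.NAME form, so secrets passed to reusable workflows via secrets: inherit, bracket syntax, or toJSON(secrets) won't count as used. Judging what each secret grants stays manual.

```python
import re
from datetime import datetime, timedelta, timezone
from pathlib import Path
from typing import Optional

# Only the dotted form, e.g. ${{ secrets.DEPLOY_TOKEN }}; other reference forms are missed.
SECRET_REF_RE = re.compile(r"secrets\.([A-Za-z_][A-Za-z0-9_]*)")


def load_workflows(repo_root: str = ".") -> list[str]:
    """Read every workflow file (.yml or .yaml) under .github/workflows."""
    wf_dir = Path(repo_root) / ".github" / "workflows"
    return [p.read_text(encoding="utf-8") for p in sorted(wf_dir.glob("*.y*ml"))]


def audit_secrets(
    api_secrets: list[dict],
    workflow_texts: list[str],
    now: Optional[datetime] = None,
    max_age_days: int = 180,
) -> list[tuple[str, list[str]]]:
    """Return (secret name, findings) for secrets that look unused or stale."""
    now = now or datetime.now(timezone.utc)
    # GitHub secret names are case-insensitive, so compare in uppercase.
    used = {name.upper() for text in workflow_texts for name in SECRET_REF_RE.findall(text)}
    report = []
    for secret in api_secrets:
        name = secret["name"].upper()
        updated = datetime.fromisoformat(secret["updated_at"].replace("Z", "+00:00"))
        findings = []
        if name not in used:
            findings.append("not referenced by any workflow")
        age = now - updated
        if age > timedelta(days=max_age_days):
            findings.append(f"not updated in {age.days} days")
        if findings:
            report.append((name, findings))
    return report
```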

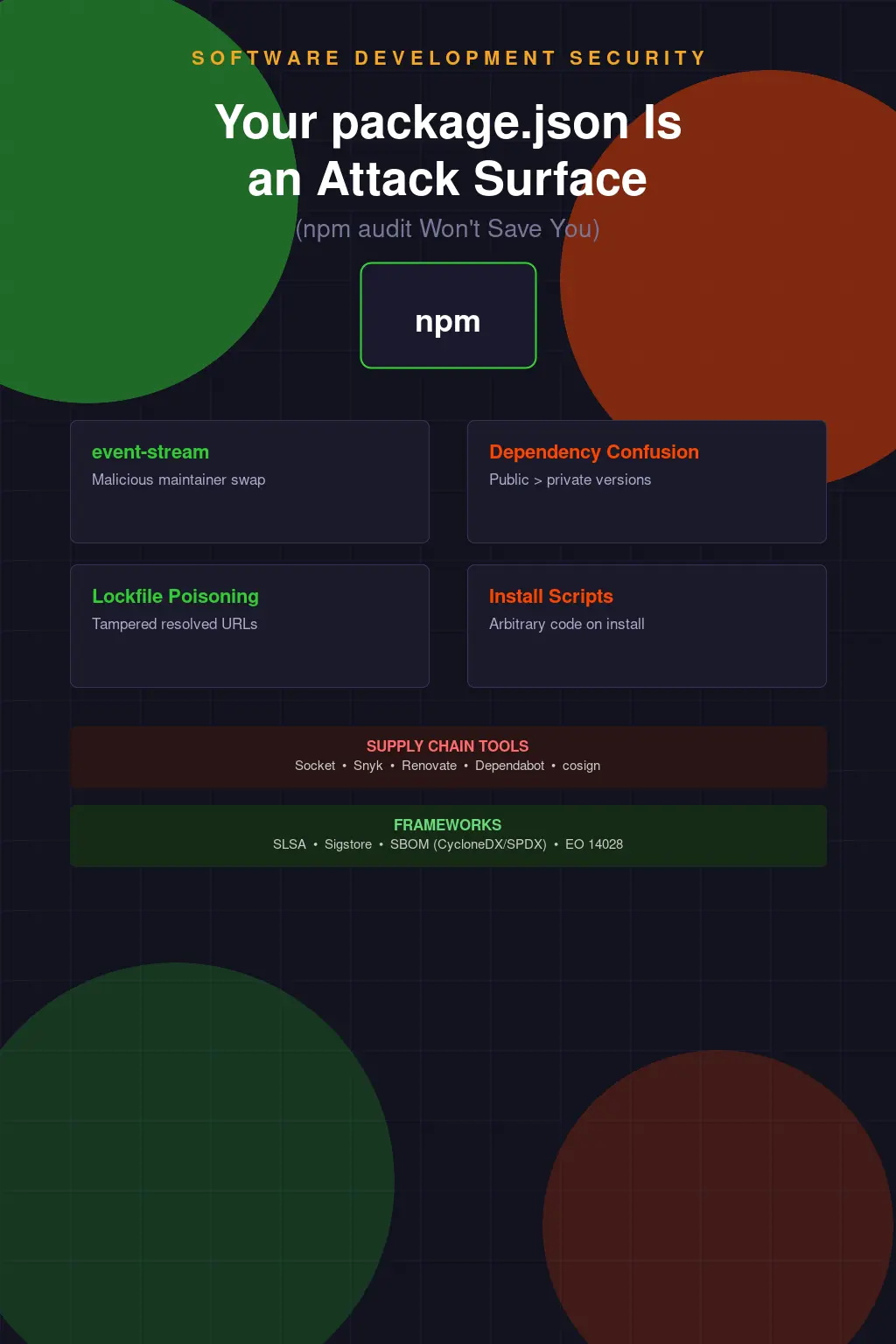

Dependabot and Renovate deserve specific mention in the context of supply chain risk. These tools automate dependency update pull requests, which is genuinely valuable for keeping dependencies current and reducing exposure to known vulnerabilities. But they also create a systematic mechanism for auto-merged changes to your dependency tree, which is exactly what a supply chain attacker needs. If you have Dependabot configured to auto-merge minor and patch updates, and a package you depend on gets compromised through a maintainer account takeover, the malicious update can land in your production build without human review. This isn't a reason not to use Dependabot — it's a reason to be thoughtful about auto-merge policies and to have integrity checking on what actually ends up in your build artifacts.
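One concrete form of that integrity checking: record SHA-256 digests for what was reviewed, and refuse to package anything that doesn't match. The Python sketch below assumes a hypothetical manifest format, a mapping from relative path to hex digest; in practice the package manager often does this for you (pip's --require-hashes mode, the integrity fields in npm's package-lock.json). Be clear about its limits. It catches drift between what was reviewed and what gets built, but if an auto-merged update wrote a malicious release's hash into the lockfile, the digest will match. That's why the auto-merge policy matters as much as the check.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream a file through SHA-256 so large artifacts don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(1 << 16), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_manifest(manifest: dict[str, str], root: Path) -> list[str]:
    """Compare files under `root` with a hypothetical {relative path: hex digest} manifest."""
    problems = []
    for rel_path, expected in sorted(manifest.items()):
        path = root / rel_path
        if not path.is_file():
            problems.append(f"missing: {rel_path}")
        elif sha256_of(path) != expected.lower():
            problems.append(f"digest mismatch: {rel_path}")
    return problems
```

A build step would load the manifest, call verify_manifest, and stop before packaging if the returned list is non-empty.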

Artifact Signing and the Supply Chain Trust Problem

Sigstore and cosign have made artifact signing significantly more accessible than it used to be. The traditional barrier was key management: generating a signing key, securing it, distributing the public key, managing rotation. Sigstore's keyless signing model uses short-lived certificates tied to OIDC identities, with a public transparency log (Rekor) providing an auditable record of what was signed, when, and by what identity. You can verify that a container image was signed by a GitHub Actions workflow running in a specific repository without managing any long-term signing keys.
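Verification is where the keyless model pays off, because the policy is an identity rather than a key. A minimal Python sketch that shells out to cosign (assumed installed, using the version 2 flag names) and accepts an image only if its signing certificate was issued by GitHub's OIDC issuer to one specific workflow file, in one repository, on one ref. The repository, workflow path, and image names are placeholders. Verify by digest rather than by tag where you can, since a tag can be repointed after signing.

```python
import shutil
import subprocess

GITHUB_ISSUER = "https://token.actions.githubusercontent.com"


def cosign_verify_cmd(image: str, repo: str, workflow_path: str, ref: str = "refs/heads/main") -> list[str]:
    """Build a keyless `cosign verify` invocation pinned to one workflow identity."""
    # GitHub Actions signing certificates name the workflow file and the ref it ran on.
    identity = f"https://github.com/{repo}/{workflow_path}@{ref}"
    return [
        "cosign", "verify",
        "--certificate-identity", identity,
        "--certificate-oidc-issuer", GITHUB_ISSUER,
        image,
    ]


def image_is_trusted(image: str, repo: str, workflow_path: str, ref: str = "refs/heads/main") -> bool:
    """Run the verification; cosign exits non-zero if no matching signature is found."""
    if shutil.which("cosign") is None:
        raise RuntimeError("cosign is not installed")
    result = subprocess.run(
        cosign_verify_cmd(image, repo, workflow_path, ref), capture_output=True, text=True
    )
    return result.returncode == 0
```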

The adoption curve is still early. Most organizations are not signing their build artifacts and not verifying signatures at deployment time. The value proposition is real — if you're pulling base images from Docker Hub, if you're consuming artifacts from internal registries shared across teams, if you're deploying from build artifacts stored in S3, signature verification is your mechanism for asserting that what gets deployed is what the authorized build process produced and hasn't been tampered with in transit or at rest. Without it, you're trusting the storage layer and the transport layer to have maintained integrity, and those are not zero-risk assumptions.

The Kubernetes ecosystem is moving toward admission controllers that enforce artifact verification — OPA Gatekeeper and Kyverno can both be configured to require signed images with verified provenance. This is where the ecosystem is heading. Implementing it today means you're building toward a standard that will likely become a requirement rather than a differentiator.

Pipeline Security Review Is Not Optional

Workflow files and pipeline configuration are code. They deserve the same security review as application code — maybe more, because they execute with elevated privileges and run in a trusted context. Most code review processes don't specifically gate on pipeline changes. A PR that modifies .github/workflows/deploy.yml goes through the same review queue as a PR that fixes a typo in a README, which means it's likely to get a quick approval from whoever happens to be in the rotation.

What you want is an explicit policy that pipeline configuration changes require review from people who understand the security implications — who can look at a new workflow step and ask whether it needs the permissions it's requesting, whether it's pulling from a trusted action version, whether it's leaking credentials through environment variables or log output. GitHub's CODEOWNERS mechanism can enforce reviewer requirements on specific paths. Use it for your workflow directory.
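CODEOWNERS uses last-match-wins ordering, which makes it easy to add a protective rule and then silently override it with a broader pattern further down the file. Here's a rough Python sketch for checking who actually owns a workflow file. Its matcher is a deliberate simplification: it handles *, directory patterns such as /.github/workflows/, and filename globs such as *.yml, but not the full gitignore-style syntax GitHub implements (for instance, fnmatch's * also matches across /). Also note that CODEOWNERS only blocks merges when the branch protection rule requires review from code owners.

```python
import fnmatch


def parse_codeowners(text: str) -> list[tuple[str, list[str]]]:
    """Parse CODEOWNERS lines into (pattern, owners), skipping blanks and comments."""
    rules = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        pattern, *owners = line.split()
        rules.append((pattern, owners))
    return rules


def _matches(pattern: str, path: str) -> bool:
    """Simplified matcher; not GitHub's full gitignore-style semantics."""
    if pattern == "*":
        return True
    anchored = pattern.startswith("/")
    pat = pattern.lstrip("/")
    if pat.endswith("/"):  # directory pattern
        if anchored:
            return path.startswith(pat)
        return ("/" + path).find("/" + pat) != -1
    if "/" in pat:  # path glob, treated as relative to the repo root
        return fnmatch.fnmatchcase(path, pat)
    return fnmatch.fnmatchcase(path.rsplit("/", 1)[-1], pat)  # filename glob at any depth


def owners_for(path: str, rules: list[tuple[str, list[str]]]) -> list[str]:
    """Return owners from the last matching rule, mirroring CODEOWNERS precedence."""
    owners: list[str] = []
    for pattern, rule_owners in rules:
        if _matches(pattern, path):
            owners = rule_owners
    return owners
```

A CI check can then assert that a security team handle appears in owners_for(".github/workflows/deploy.yml", rules) for every workflow file, so a later catch-all rule can't quietly take over.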

GitHub Actions' third-party action ecosystem is another surface that doesn't get enough scrutiny. Actions from third parties run in your workflow context with access to your workflow's secrets. There's a well-established community norm of pinning actions to a specific commit SHA rather than a mutable tag: uses: actions/checkout@v4 is a mutable reference to whatever SHA that tag currently points to; uses: actions/checkout@11bd71901bbe5b1630ceea73d27597364c9af683 is a specific, auditable commit. The difference matters because a tag can be moved after you review it. A SHA cannot. Pin your actions. It's a small effort, Dependabot and Renovate can both keep the pinned SHAs current, and it eliminates a meaningful supply chain risk.
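Finding unpinned references is easy to automate. A minimal Python sketch that scans workflow text for uses: keys, at step level and at job level (reusable workflows), and reports any reference not pinned to a full 40-character commit SHA. Local actions (./path) and docker:// references are skipped because they need different checks. It's a line-oriented regex rather than a YAML parser, so treat it as a lint, not a guarantee.

```python
import re
from pathlib import Path

# A `uses:` key at step level ("- uses: ...") or job level (reusable workflows).
USES_RE = re.compile(r"""^\s*(?:-\s*)?uses:\s*["']?([^"'\s#]+)""", re.MULTILINE)
FULL_SHA_RE = re.compile(r"[0-9a-f]{40}")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return `uses:` references that aren't pinned to a full commit SHA."""
    findings = []
    for ref in USES_RE.findall(workflow_text):
        if ref.startswith(("./", "docker://")):
            continue  # local actions and container images need different checks
        _, _, version = ref.partition("@")
        if not FULL_SHA_RE.fullmatch(version):
            findings.append(ref)
    return findings


def main(workflow_dir: str = ".github/workflows") -> int:
    """Print unpinned references per file; return 1 if any were found, for use as a CI gate."""
    found = False
    for path in sorted(Path(workflow_dir).glob("*.y*ml")):
        for ref in unpinned_actions(path.read_text(encoding="utf-8")):
            print(f"{path}: {ref}")
            found = True
    return 1 if found else 0
```

Calling sys.exit(main()) from a CI step fails the job on any unpinned reference, which keeps the pins from eroding as new workflows get added.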

The Trusted Pipeline Is an Assumption Worth Challenging

The mental model shift that makes CI/CD security click is treating the pipeline as an adversarial boundary rather than a trusted internal system. Every input to the pipeline — source code, dependencies, base images, external actions, secrets from third-party systems — is a potential injection point. Every output — deployment artifacts, production changes, API calls made during the build — represents real-world impact. The pipeline is an automation layer that bridges developer intent to production execution, and anywhere that bridge can be hijacked represents a privilege escalation from developer access to production access.

That's not a reason to slow down deployments or add bureaucratic process to every merge. It's a reason to think carefully about the architecture: where are the trust boundaries, what privileges exist at each stage, how are those privileges scoped, and what would it take for an attacker to abuse them. The organizations that are getting this right aren't deploying slower — they're deploying with better-defined privilege models, shorter-lived credentials, and detection in place to notice when the pipeline does something unusual. Velocity and security aren't in tension here if you design for both from the start.