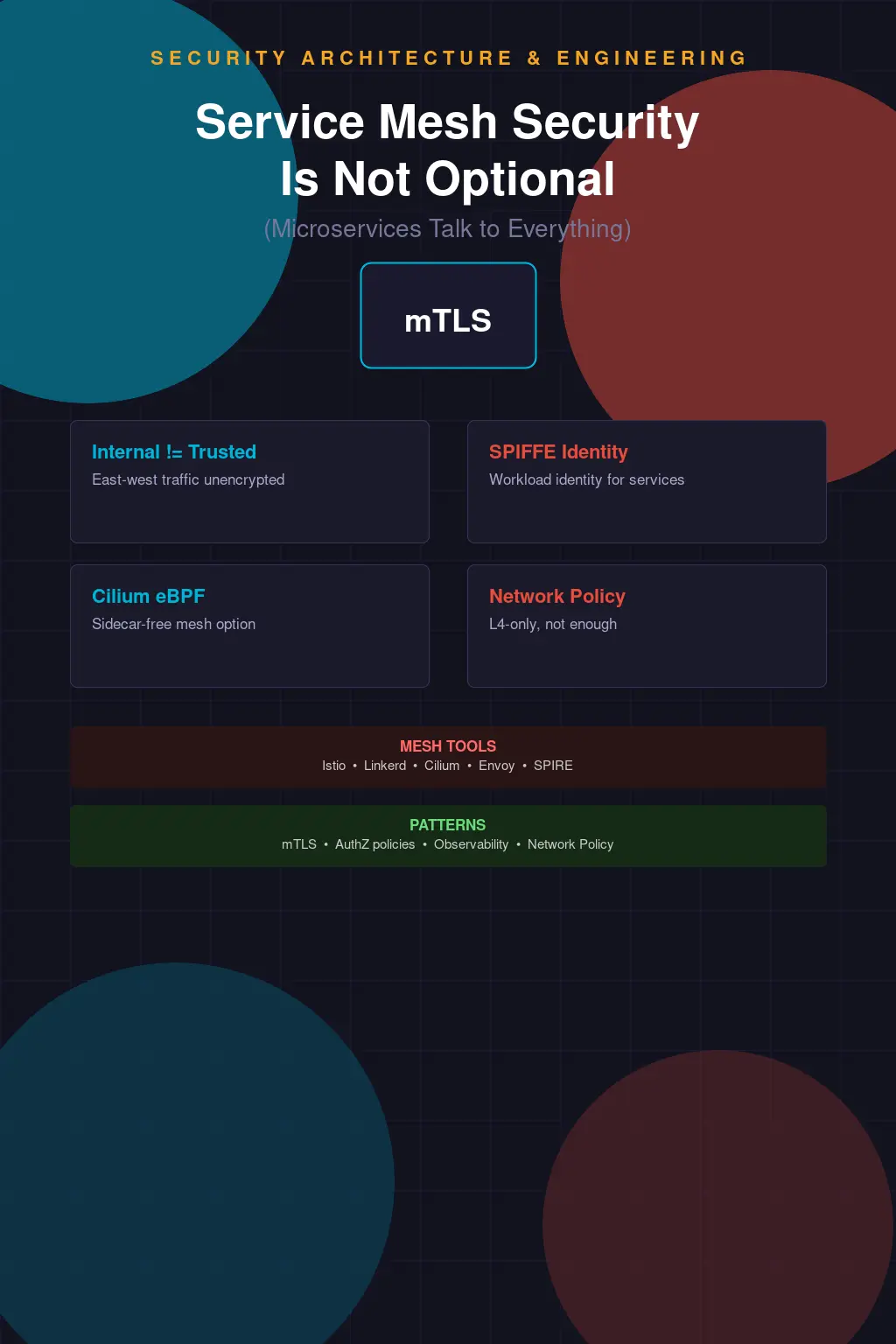

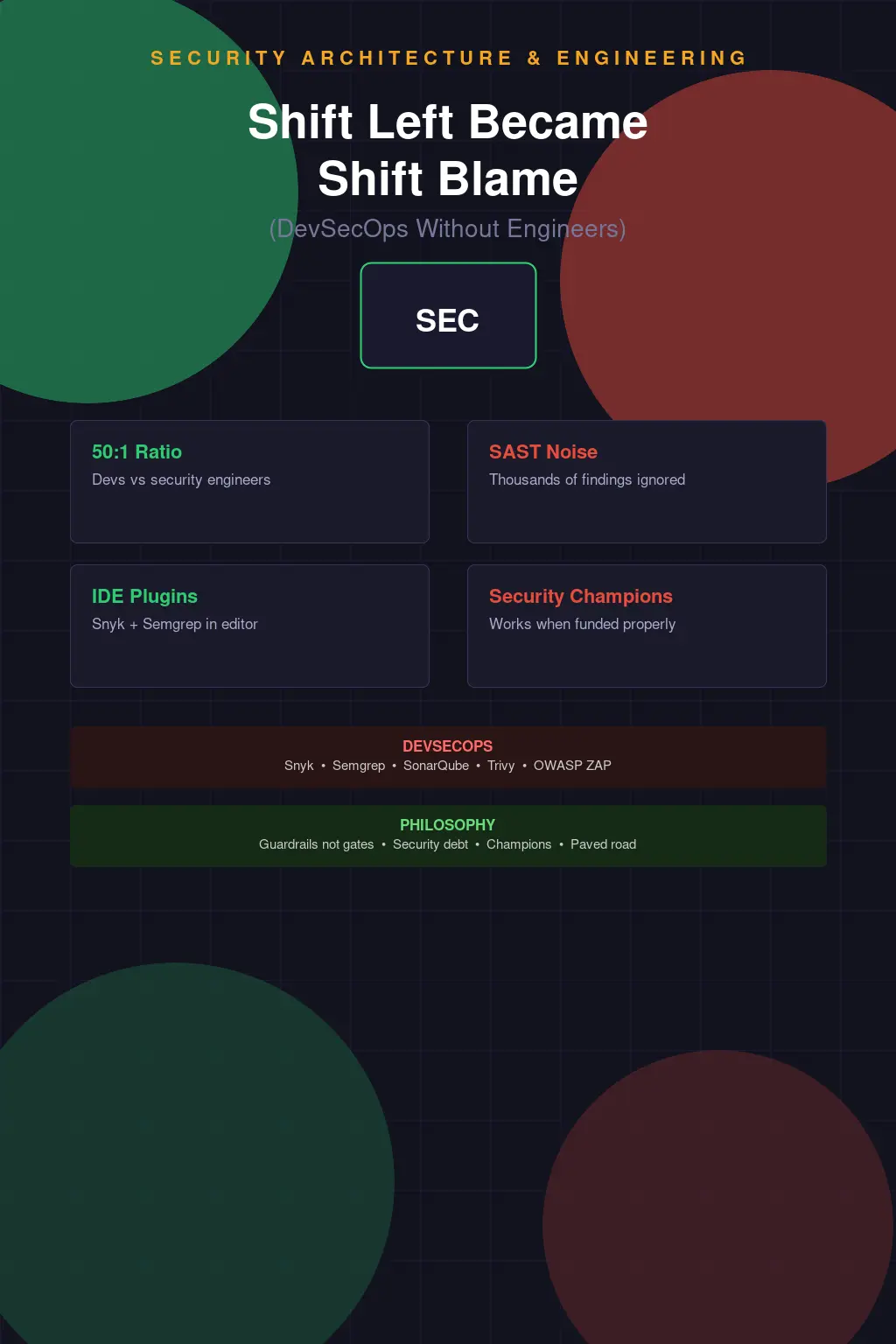

Service Mesh Security Is Not Optional When Your Microservices Talk to Everything

The most dangerous sentence in microservices architecture is "it's internal traffic." I've heard it in design reviews, in threat modeling sessions, in post-incident discussions where someone is explaining why a compromised service was able to make API calls to seventeen other services before anyone noticed. Internal traffic. Trusted network. The firewall is at the perimeter so everything inside is fine. This assumption was questionable in monolithic architectures. In microservices, where every function is a network call and your application's attack surface is now distributed across dozens of services with their own codebases and their own vulnerabilities, it's actively dangerous.

The east-west traffic problem is simple to state: when a service in your cluster is compromised — through a vulnerability in its code, a dependency, a misconfiguration — it has network-level access to everything else in that cluster that it can reach. Without explicit service-to-service authorization, "can reach" means "can call." The compromised payment service can call the user service. The compromised user service can call the admin service. The compromised anything-with-network-access can enumerate, probe, and pivot through your internal APIs using the same paths your legitimate services use. This is lateral movement, and in a microservices environment without proper controls, it's trivially easy.

A service mesh addresses this by inserting a proxy (a sidecar container running alongside each service) that intercepts all incoming and outgoing traffic and enforces policy. Mutual TLS (mTLS) between services means every connection requires both parties to present certificates, proving identity. Authorization policies define which services are allowed to call which other services, on which paths, with which methods. Once those policies are deny-by-default, traffic that doesn't match an allow rule gets rejected at the proxy, not inside the application. The service doesn't even receive the request.
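To make the enforcement model concrete, here is a minimal sketch of the check a sidecar performs on each inbound request. It is a toy model, not any mesh's real API: the `Rule` type, the `is_allowed` function, the service names, and the paths are all hypothetical, and the caller identity is assumed to have already been verified by mTLS.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    """One allow rule: which caller may use which methods on which paths."""
    source: str          # caller identity, assumed already verified via mTLS
    methods: frozenset   # e.g. frozenset({"GET", "POST"})
    path_pattern: str    # glob-style pattern, e.g. "/v1/charges/*"


def is_allowed(rules, source, method, path):
    """Deny by default: a request passes only if some rule matches it."""
    return any(
        rule.source == source
        and method in rule.methods
        and fnmatchcase(path, rule.path_pattern)
        for rule in rules
    )


# Hypothetical policy attached to a payment service's sidecar.
PAYMENT_RULES = [
    Rule("checkout", frozenset({"POST"}), "/v1/charges/*"),
    Rule("reporting", frozenset({"GET"}), "/v1/charges/*"),
]
```

With these rules, a compromised user service calling the payment service matches nothing and is rejected before the application sees the request. The important design choice is the `any(...)` over an explicit allow list: an empty rule set denies everything.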

mTLS Is Not Just Encryption — It's Identity

People focus on the encryption half of mTLS and underweight the identity half. Yes, mTLS encrypts traffic between services — that matters for regulatory compliance and defense in depth. But the more important property for lateral movement defense is authentication: in a properly configured mTLS environment, every service presents a cryptographic identity and every service can verify the identity of who's calling it. That identity is then available to authorization policy engines.

Istio achieves this through SPIFFE (Secure Production Identity Framework for Everyone), an open standard for workload identity. Each service gets a SPIFFE Verifiable Identity Document (SVID), which is essentially an X.509 certificate encoding the service's identity as a URI: spiffe://cluster.local/ns/payments/sa/payment-service. That identity is cryptographically bound to the workload through the certificate and the CA that issued it. Istio's control plane manages certificate issuance and rotation automatically; this was the job of the standalone Citadel component in older releases and has been part of istiod since Istio 1.5. From an operational standpoint, you don't manage service certificates manually; the mesh handles it.
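The identity URI is structured enough to take apart mechanically. The sketch below parses the Istio-style layout shown above (trust domain, then `ns/<namespace>/sa/<service-account>`) into its components. The `WorkloadIdentity` type and `parse_spiffe_id` function are hypothetical names for illustration, and the code assumes that specific path layout; SPIFFE itself allows other path shapes.

```python
from typing import NamedTuple
from urllib.parse import urlsplit


class WorkloadIdentity(NamedTuple):
    trust_domain: str
    namespace: str
    service_account: str


def parse_spiffe_id(spiffe_id: str) -> WorkloadIdentity:
    """Split an Istio-style SPIFFE ID into trust domain, namespace, service account."""
    parts = urlsplit(spiffe_id)
    if parts.scheme != "spiffe" or not parts.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")

    # Expected path layout (an assumption of this sketch): /ns/<namespace>/sa/<account>
    segments = parts.path.strip("/").split("/")
    if (
        len(segments) != 4
        or segments[0] != "ns"
        or segments[2] != "sa"
        or not all(segments)
    ):
        raise ValueError(f"unexpected SPIFFE ID path: {parts.path!r}")

    return WorkloadIdentity(parts.netloc, segments[1], segments[3])


identity = parse_spiffe_id("spiffe://cluster.local/ns/payments/sa/payment-service")
```

This is why authorization policy can be written in terms of namespaces and service accounts: those fields come from the verified certificate, not from anything the caller claims in a header.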

Linkerd takes a lighter-weight approach than Istio. It doesn't have the full breadth of traffic management features (its traffic shifting and fault injection are more basic, and its policy model is less extensible), but its operational simplicity is genuinely compelling. Linkerd's data plane proxy is written in Rust, which means the memory safety story is better than that of Envoy's C++, and the proxy overhead is typically lower. For teams who want mTLS and basic authorization without signing up for Istio's operational complexity, Linkerd is worth serious consideration. The "which mesh" question doesn't have a universal answer, but "do you need a mesh" increasingly does.

The Sidecar Overhead Debate

The objection I hear most often from platform engineering teams when service mesh comes up is latency. Adding a sidecar proxy to every service means every service-to-service call passes through two proxy instances — one on the caller's side, one on the callee's side. There's real overhead there: memory per sidecar, CPU for TLS termination, and added latency per hop. The question is whether that overhead is acceptable, and the honest answer is that it depends on your traffic patterns and your latency requirements.

For most services, Istio's Envoy-based sidecar adds somewhere in the single-digit milliseconds range to each call. Linkerd's Rust proxy adds less. In a microservices architecture where you might have five or ten service hops per user request, that adds up. If you're running a high-frequency trading system or a real-time gaming backend where every millisecond counts, the overhead matters and you need to measure it carefully in your environment before committing. For most enterprise workloads — internal APIs, data processing pipelines, B2B SaaS — the overhead is measurable but not meaningful compared to the security value.
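A back-of-the-envelope calculation helps decide whether the overhead matters for a given request path. The numbers below are illustrative assumptions, not measurements: ten sequential hops, an assumed 2 ms of added latency per call, and a hypothetical 200 ms latency budget for the user request.

```python
def added_latency_ms(hops: int, per_call_overhead_ms: float) -> float:
    """Total mesh overhead when the hops of one request run one after another."""
    return hops * per_call_overhead_ms


def share_of_budget(added_ms: float, budget_ms: float) -> float:
    """Fraction of the end-to-end latency budget consumed by the mesh."""
    return added_ms / budget_ms


# Illustrative inputs only; measure these in your own environment.
HOPS = 10
PER_CALL_OVERHEAD_MS = 2.0
LATENCY_BUDGET_MS = 200.0

total = added_latency_ms(HOPS, PER_CALL_OVERHEAD_MS)
share = share_of_budget(total, LATENCY_BUDGET_MS)
```

Under those assumptions the mesh adds 20 ms, a tenth of the budget. The same arithmetic against a 5 ms budget gives a very different answer, which is the point: plug in measured per-call overhead and your real hop count before deciding.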

eBPF-based approaches like Cilium change this tradeoff. Rather than running a sidecar proxy as a separate container, Cilium implements network policy and observability directly in the Linux kernel using eBPF programs. No sidecar. No additional container per pod. No per-pod TCP proxy adding latency to every hop. The eBPF programs hook into the kernel's network stack and enforce L3/L4 policy before packets even reach userspace; L7 rules are handed to a shared per-node proxy rather than a per-pod one. The performance profile can be substantially better, and for teams with the Kubernetes and Linux expertise to operate it, Cilium's network policy model, which extends standard Kubernetes NetworkPolicy with layer 7 (HTTP) awareness, provides a lot of the east-west security value without the sidecar complexity.

Cilium's sidecar-free model comes with tradeoffs. You lose much of the rich traffic management that comes with Envoy-based meshes: no sophisticated routing, and fewer service-level metrics out of the box than a per-pod proxy generates. You gain performance and operational simplicity. If your primary concern is network-level access control and you don't need Istio's traffic management capabilities, Cilium is worth evaluating seriously. The two approaches aren't mutually exclusive either: some organizations run Cilium for network policy and either Linkerd or Istio for service-level mTLS and observability.

Kubernetes NetworkPolicy Is Not a Service Mesh

Standard Kubernetes NetworkPolicy is underused and commonly misunderstood. It operates at Layer 3/4 — IP addresses and ports — and it can enforce that only certain pods can talk to certain other pods. That's legitimately useful and it's the right first step before you get to a full service mesh. But it has important limitations that people discover when they need to do real authorization.

NetworkPolicy can say: pods in the payments namespace can receive connections on port 8080 from pods in the api-gateway namespace. It cannot say: only the checkout workflow endpoint on the payment service should be callable by the gateway, not the admin endpoint. That layer-7 granularity — HTTP method, path, headers — requires either a service mesh or an eBPF solution like Cilium with L7 policy enabled. Standard NetworkPolicy with L4 rules is better than nothing. It's not a substitute for service-level authorization.
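The gap is easy to see when both kinds of rule are written as predicates over the same request. This is an illustrative model, not NetworkPolicy or mesh syntax: the `Request` type, the two functions, and the `/checkout` and `/admin` paths are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    source_namespace: str
    port: int
    method: str
    path: str


def l4_allows(req: Request) -> bool:
    """What an L3/L4 rule can express: who is connecting, and on which port."""
    return req.source_namespace == "api-gateway" and req.port == 8080


def l7_allows(req: Request) -> bool:
    """What an L7 rule adds: the HTTP method and path of the request itself."""
    return l4_allows(req) and req.method == "POST" and req.path.startswith("/checkout")


checkout = Request("api-gateway", 8080, "POST", "/checkout/orders")
admin = Request("api-gateway", 8080, "POST", "/admin/refunds")
```

Both requests pass `l4_allows`, because at that layer they are indistinguishable: same source, same port. Only `l7_allows` rejects the admin call.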

The other critical gap in NetworkPolicy is that it defaults to allow-all. A cluster with no NetworkPolicy defined allows all pod-to-pod communication. This is what most clusters look like before anyone gets around to writing policies. Getting to a deny-by-default posture — where you've explicitly defined what's allowed and everything else is blocked — requires writing policies for every service, which is tedious and gets out of sync as services change. Service meshes help here because the authorization policy engine is centralized and the certificate-based identity means you're working with service names rather than IP ranges that change as pods restart.
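The default-allow behavior follows from how policies are evaluated: a pod is only restricted once at least one policy selects it, and selecting policies are additive. The sketch below models that rule for ingress within a single namespace. `IngressPolicy` and `ingress_allowed` are hypothetical names, and the model is simplified to namespace-based sources only.

```python
from dataclasses import dataclass


@dataclass
class IngressPolicy:
    pod_selector: dict                           # labels to match; {} selects every pod
    allowed_namespaces: frozenset = frozenset()  # empty set allows no sources


def selects(policy: IngressPolicy, pod_labels: dict) -> bool:
    """A policy selects a pod when every selector label matches the pod's labels."""
    return all(pod_labels.get(k) == v for k, v in policy.pod_selector.items())


def ingress_allowed(policies, pod_labels: dict, source_namespace: str) -> bool:
    selecting = [p for p in policies if selects(p, pod_labels)]
    if not selecting:
        return True  # no policy selects this pod, so all ingress is allowed
    # Once selected, the pod accepts only what some selecting policy allows.
    return any(source_namespace in p.allowed_namespaces for p in selecting)


payment_pod = {"app": "payment"}
deny_all = IngressPolicy(pod_selector={})
allow_gateway = IngressPolicy({"app": "payment"}, frozenset({"api-gateway"}))
```

With an empty policy list, any source reaches `payment_pod`. Adding `deny_all` alone blocks everything, and adding `allow_gateway` on top re-opens exactly one path. That pair is the usual shape of a deny-by-default posture: one blanket policy, then one explicit allow per legitimate caller.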

Observability as a Security Tool

One of the underappreciated benefits of service mesh is the observability it provides by default. Every service mesh worth using generates metrics and traces for every service-to-service call: latency, error rates, request volumes, source and destination service identities. This is valuable for performance monitoring, but it's also a security telemetry source that most teams haven't fully exploited.

Distributed tracing, when implemented well, creates a graph of service interactions for every request. That graph tells you exactly which services called which other services, in what order, with what latency, for any given trace. An attacker performing lateral movement — systematically calling services they shouldn't be touching — shows up as anomalous patterns in that graph: a service that normally only calls two downstream dependencies suddenly calling twelve, or a service calling the admin API when the distributed trace shows the request originated from a context that never reaches that code path in normal operation.
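A minimal version of that comparison can be sketched as a set difference between a baseline call graph and an observed one. The function names and service names here are hypothetical, and the edges are assumed to be (caller, callee) pairs already extracted from mesh telemetry or trace spans; real detection would also need time windows and tolerance for legitimate change.

```python
from collections import defaultdict


def build_call_graph(edges):
    """Turn (caller, callee) pairs into a mapping of caller -> set of callees."""
    graph = defaultdict(set)
    for caller, callee in edges:
        graph[caller].add(callee)
    return graph


def unexpected_calls(baseline, observed):
    """Return {caller: callees} for every edge present in observed but not in baseline."""
    findings = {}
    for caller, callees in observed.items():
        new_callees = callees - baseline.get(caller, set())
        if new_callees:
            findings[caller] = new_callees
    return findings


# Hypothetical baseline learned from normal operation.
baseline = build_call_graph([
    ("checkout", "payment"),
    ("checkout", "inventory"),
    ("payment", "ledger"),
])

# Hypothetical observation window in which payment starts probing other services.
observed = build_call_graph([
    ("checkout", "payment"),
    ("payment", "ledger"),
    ("payment", "admin"),
    ("payment", "users"),
])

findings = unexpected_calls(baseline, observed)
```

Here `findings` flags only the payment service, with the two destinations it never called in the baseline. Edges that disappear (checkout no longer calling inventory) are deliberately ignored, since this sketch only looks for new reach.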

I've seen this catch real problems. Not sophisticated APT activity — but misconfigurations and unexpected code paths that, if they were actual attacks, would represent meaningful exposure. A service calling a database migration endpoint that should only be reachable from the migration job. A service making calls to an internal metrics endpoint that had more information exposure than anyone realized. The observability the mesh provides as a byproduct of its traffic management function creates a behavioral baseline that's actually useful for anomaly detection, which is more than you can say for most security tooling that requires explicit configuration to do anything useful.

When You Need It vs. When You Don't

I'm not going to tell you that every microservices deployment needs a full service mesh on day one. If you have three services, a mesh adds complexity that's probably not worth it yet. If you're building something new and you're not sure what your service topology will look like in a year, getting the foundations right — mTLS from the start, explicit NetworkPolicy, Kubernetes RBAC locked down — is more valuable than committing to Istio before you know your requirements.

The inflection point where mesh becomes clearly worth the complexity investment is roughly when you have enough services that manually managing service-to-service trust becomes error-prone, or when you have compliance requirements around encryption in transit for internal traffic, or when you've had an incident or near-miss where lateral movement was possible and you're now motivated to do something about it. That last one is unfortunately how most organizations end up here — reactive rather than proactive.

The complexity tax of service mesh is real and shouldn't be minimized. Istio's configuration surface area is enormous. Debugging mTLS failures requires understanding certificate chains, SPIFFE identity formats, and Envoy's access log format. Upgrades require careful attention to control plane and data plane version compatibility. The teams that run service meshes successfully either have platform engineering support dedicated to the infrastructure layer or they've invested in deep expertise in the specific mesh they're running. Adopting Istio and then leaving it to individual application teams to figure out is a recipe for misconfiguration that defeats the security purpose of having it.

The "internal traffic is trusted" assumption will continue to get organizations in trouble until the industry normalizes explicit service-to-service authorization as a baseline requirement rather than an advanced feature. We've normalized TLS for external traffic: nobody ships a public API over HTTP anymore without getting called out for it immediately. The same norm needs to develop for internal traffic. mTLS between services, explicit authorization policies defining which services can call which, and observability that creates a behavioral baseline should be table stakes for microservices deployments, not aspirational architecture goals. The tooling exists. The operational patterns are established. The cost of not doing it, measured in post-compromise blast radius, is high. There's no good argument left for treating the network as a trust boundary in 2024.